2023

Navigation

Jan - Feb - Mar - Apr

May - Jun - Jul - Aug

Sep - Oct - Nov - Dec

Resources

C++ Initialization 1/5/2023

What is a Semiconductor? 1/14/2023

Static Variables in C 1/15/2023

Memory Layout of C Programs 1/15/2023

What is constinit in C++ 1/18/2023

Understanding constexpr in C++ 1/18/2023

Lecture on constexpr 1/19/2023

C++ Graph Implementation 1/21/2023

More on Graphs 1/22/2023

What is a Git SSH Key? 1/22/2023

GitHub SSH Key Fingerprints 1/22/2023

Character Strings in C 1/25/2023

adafruit PIR Motion Sensor 1/25/2023

Learn DSA 1/27/2023

Time Complexity Examples 1/27/2023

Circular Linked List Split 1/29/2023

Sort a Matrix Problem 1/31/2023

The Evolution of CPU Processing Power 2/6/2023

Programming a Raspberry Pi Pico with C or C++ 2/6/2023

Tips for Embedded Study 2/10/2023

Compute the Integer Absolute Value Without Branching 2/14/2023

Bit Twiddling Hacks 2/14/2023

madewithwebassembly.com 2/19/2023

UART Protocol Explanation 3/1/2023

Magnetic Core Memory 3/6/2023

How Transistors Work 3/6/2023

7 Best Artificial Intelligence (AI) Courses for 2023 4/10/2023

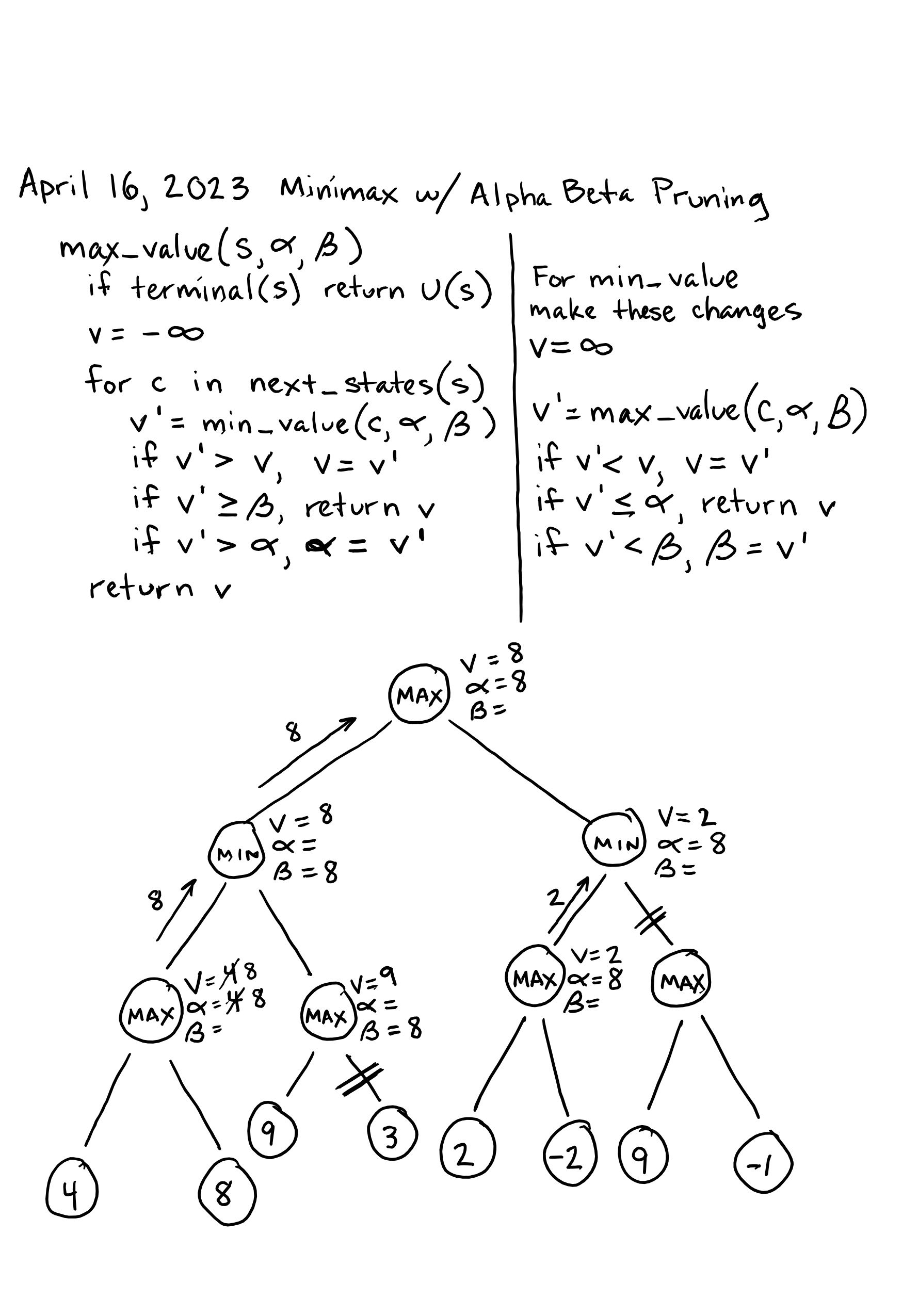

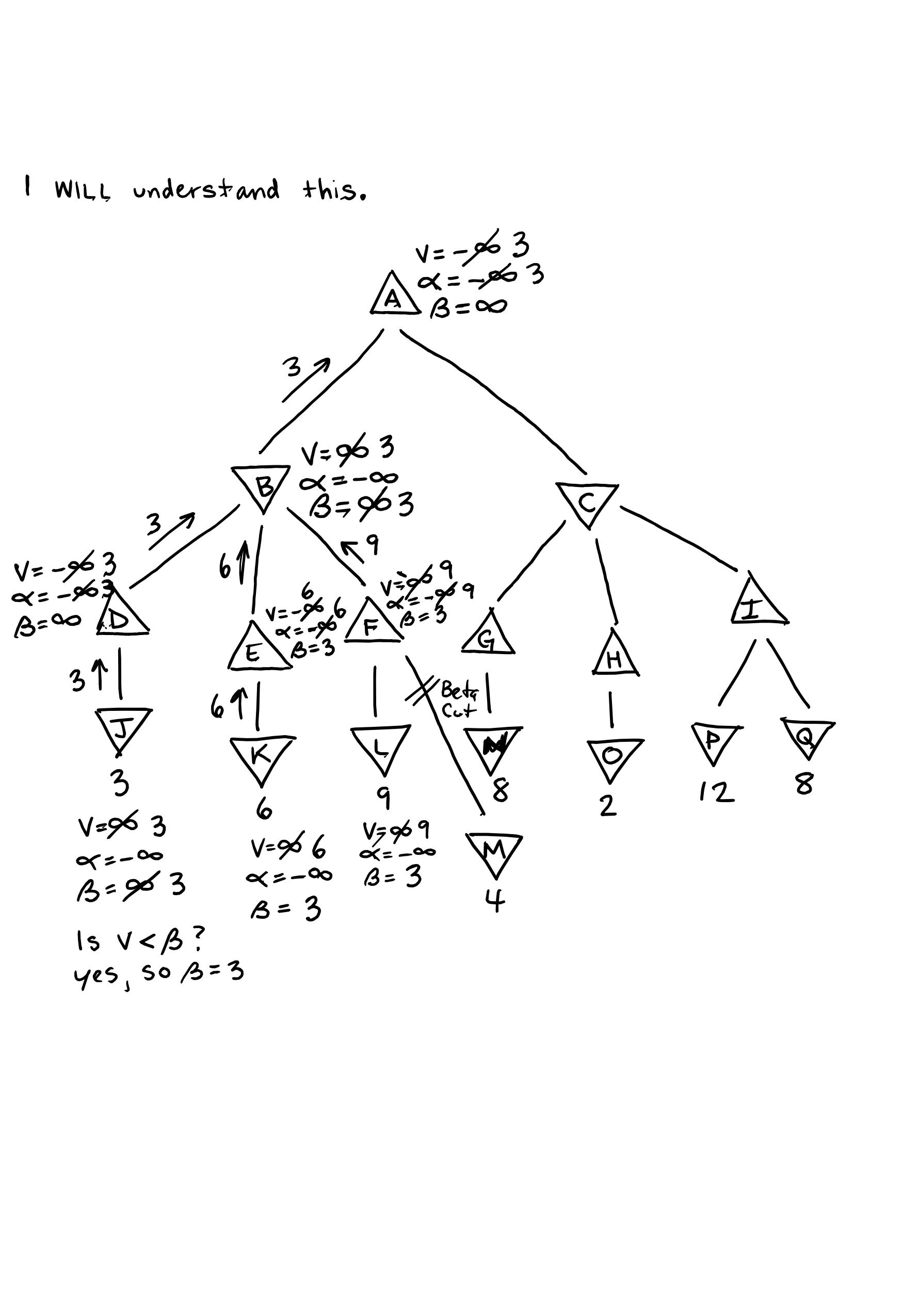

Minimax With Alpha Beta Pruning 4/16/2023

Alpha Beta Pruning / Cuts 4/16/2023

Project CETI 4/25/2023

ChatGPT Release Article 5/2/2023

Tree of Thoughts Academic Paper 5/20/2023

Multi-Dimensional Data (as used in Tensors) - Computerphile 6/4/2023

Tensors for Neural Networks, Clearly Explained!!! 6/4/2023

How to implement KNN from scratch with Python 7/8/2023

IBM: What Is Gradient Descent? 7/19/2023

An Introduction to Linear Regression Analysis 7/19/2023

How to Calculate Linear Regression Using Least Square method 7/19/2023

Gradient Descent Algorithm — A Deep Dive 7/19/2023

Google Colab - 10 Tips 8/27/2023

Importing Kaggle Datasets into Colab 8/30/2023

Documentation For scipy.signal.spectrogram 9/7/2023

Heap Sort Explanation - GeeksForGeeks 10/10/2023

C++ Friend Functions - tutorialspoint.com 10/10/2023

Image Annotation - makesense.ai 10/23/2023

Hugging Face Dataset Walkthrough 10/23/2023

Instance Segmentation vs Object Detection 10/23/2023

Ultralytics Documentation 10/29/2023

Fix Image Rotation on iOS 11/2/2023

My Programs

adjacency_list1.cpp 1/22/2023

adjacency_matrix1.cpp 1/22/2023

circular linked list 1/28/2023

Sort A Matrix 2/1/2023

Stack 2/2/2023

func_ptr.c 2/9/2023

mergeSort1000 2/11/2023

sfmlPractice0 2/20/2023

sfmlPractice1 2/22/2023

1/1/2023

Code - Book

Read more of chapter 17, “Automation”. I’m learning about how an array of “instructions” such as Load, Add, Store, and Halt can be applied to a data array in order to perform arithmetical operations. I can see where this is going: the book is barely scratching the surface of assembly code and showing how it interacts with data.

Linux Pocket Guide

Learned how to examine the permissions of a given file or directory: ls -l myfile the resulting line will look something like this -rwxrwxrwx username username timestamp the 10-char string at the beginning tells what type of list element it is with the first char and then the remaining nine chars show what permissions three different groups have. In the example above, all groups can read, write, and execute.

1/2/2023

Mastering Data Structures and Algorithms

I can see that this course would be very instructive, and I foresee that I will use it in the future, but I think it might be the wrong choice for the nonce. I realized I’d like to explore C++ more deeply before taking a course solely dedicated to data structures and algos.

C++20 Masterclass - Udemy

I’m excited about this course. I hope I can complete it in about 3.5 months, but we’ll see! I watched sections 1 through 4. The instructor advised us to watch the environment setup videos for every OS, even if we weren’t using a particular one. This way, we could learn how it is done on each. Ultimately, I got VSCode set up on my Linux machine. We used the terminal to check whether GCC and Clang were installed, then we installed them if they weren’t already installed, then we edited the tasks.json file in our project folder to allow us to easily build with either compiler, and finally we told VSCode to use the C++20 standard in a file called c_cpp_properties.json, which was created after accessing the command palette and entering C/C++:Edit Configurations (UI).

1/3/2023

Code - Book

Currently reading the section of Chapter 17 on making the adding machine more flexible. Now, we include 2 bytes with every instruction opcode to tell the CPU where to perform the instruction. This is more flexible than using two contiguous arrays (instruction array and data array) that must be always synchronized, instruction memory address to data memory address. I find all this very fascinating and I'm eager to make it all more intuitive as I continue studying!

C++20 Masterclass

Finished watching sections five and six. I'm glad I did because they helped solidify the VSCode setup process. I like the idea of having a template project folder that I can simply cp template_project and mv template_project project_name. I am eager to get fully underway with this course, but I'm trying to make sure I don't overwork myself. Slow and steady wins the race, and I'm racing myself.

Bare Metal C

I went through the earlier part of Chapter 3 and refreshed myself; key terms are HAL (Hardware Abstraction Layer) and GPIO (General Purpose Input Output). Since I had already written the demo program last time, I simply reviewed it to make sure I understood every part of it. I’m pleased that this book goes so in depth on the build process, compiling and linking. It’s possible that I will breeze through some parts of this book since it is geared toward beginner C programmers, but I know there will be many new concepts scattered throughout.

1/4/2023

C++20 Masterclass

I worked through all the videos in Section 8. Much of it was review for me, but I still sensed the value in it. Also, it helped to increase playback speed where I already completely understood what was being taught. My biggest takeway was the difference between Core Features, Standard Library and STL (Standard Template Library). The instructor did not go in depth, but I know these categorical distinctions will be useful later on. The demonstration of the getline() function was also useful. This is how we used it:

std::string name;

getline(std::cin, name);

1/5/2022

C++20 Masterclass

Watched and worked through section 9 videos 39 and 40. Nearly all of this material is review, but once again, I just increase playback speed and skim through them. Occasionally, some simple new concepts have emerged, which is the main reason I don't want to skip anything.

Yes! Video 41 presented some new material and it all checks out with stackoverflow. The main takeaway from this video was three types of initialization: braced, functional, and assignment. Braced initialization is the preferred method since it does not allow the data to be altered or truncated if the variable is mishandled. This post on stackoverflow.com explains the concept further - they call it list initialization.

I also watched video 42 and it was also helpful. This is going to be a great course.

1/8/2022

Thoughts

I'm chipping away at this C++ course and really enjoying it! However, I've been feeling discouraged since I'm not working on any projects and I've been unsure of what I should be working on. Today I decided to do some research and find out what interests me. First, I looked at Socket Programming, but I think I found that it is merely a component of the larger topic of web programming, a field that I've thought about exploring more. The trouble is that web programming doesn't seem to excite me very much (of course that could change as my experience grows).

After more browsing, I found the topic that sparked immediate interest IoT (Internet of Things). It includes all the things I think I like: embedded systems, working with C/C++, low-level programming, understanding how data is transmitted over networks via protocols, etc. I might look back at this and chuckle at my obvious inexperience. Anyhow, I need to find what I'm interested in and lean that direction. I need to not set up artificial barriers for myself!

C++20 Masterclass

I completed the video 43, fractional numbers. Then, I wrote a practice program, implementing a pythagorean theorem function using some of the principles (braced initialization, setprecision, default arguments) I've learned.

Video 44, on boolean values, gave me the idea to store multiple boolean values in 1 byte. I wrote a program to do just that.

I finished the rest of Section 8. I found the auto keyword the most significant: it allows us to use C++ in a more-or-less dynamically-typed fashion. I have yet to see where this keyword is most useful, but I'm sure I will in the coming lectures.

Bare Metal C

I finished chapter 4. The main takeaways were from exploring the files created by the IDE at the creation of the project and from using GDB, the debugger. This chapter helped me appreciate just how much goes on under the hood, all the preliminary steps and initializations that must occur just so I can write my tiny LED-blinking program. HAL_init() caught my attention the most since it has a catalog of hardware architectures from which it initializes the hardware with the appropriate data. If you can’t tell, I don’t fully understand it yet!

1/9/2023

Research

I'm exploring potential specializations in technology, and IoT and TinyML currently stand out the most. At the moment, I can't decide between the two!

I went on LinkedIn to add my certificate from the C Programming Udemy class, create a profile summary that explains what my current job is and how I'm preparing for the future by studying Computer Science, and update my CS50x certification with the verified certificate, which I received a few days ago!

C++20 Masterclass

Completed lectures 49 through 51. This stuff was pretty basic! At the end of the lecture on Precedence and Associativity, the instructor explained that a programmer should not rely on the precedence table too much. That is to say, put parentheses in your expressions to make your intentions explicit. This will increase readability and decrease the potential of mishandling data.

Bare Metal C

Began working in chapter 4. The first part of the chapter is mainly about data types, so it is just review. Later in the chapter, I see that we’ll be getting to some content that should fill in some knowledge gaps for me.

1/10/2023

C++20 Masterclass

Sections 52 - 55. Topics covered: Increment Operators, postfix and prefix; Compound Assignment Operators (+=, -=, *=, /=, %=); Relational Operators (<, >, <=, >=, ==, !=); Logical Operators (&&, ||, !). Once again, this is pretty much all review. I’m glad to go over it, but I’d really like to get into the new stuff more.

1/11/2023

C++20 Masterclass

Sections 56 - . Video 56 was quite long, but very useful. It covered output formatting with demonstrations of several functions and manipulators that can be applied to std::cout to alter how the data is displayed in the console.

57 was on the numeric_limits class, which is found in the std::numeric_limits\<unsigned int\>::min() and std::numeric_limits<unsigned int>::max(), we are able to see that the unsigned int data type indeed goes from 0 to over 4 billion.

The weird integral types lecture explained that the int data type is the smallest type with which we can perform arithmetical operations. This is due to the design of the processor. If we take two chars or two shorts and add them, the result will be automatically cast as an int; in order to do this, the compiler has to convert each variable to int beforehand.

With that, I've completed section 9! That's all for today with this course.

Bare Metal C

I read a little more of chapter 4, and it was mostly review again. This is becoming a bit of a trend with my classes. Perhaps I need to be less of a completionist and know when to skim. I’m fine with being a completionist with the C++20 Masterclass, but with this book, I ought to start skimming ahead to content that is new.

The section Standard Integers in chapter 4 was actually very enlightening. The C standard only defines the integral types relative to each other (short is smaller than int is smaller than long). However, on some system, int is 2 bytes, and on others, it's 4 bytes (more common). To deal with this unpredictability, fixed-width data types were added with the stdint.h library (int32_t). These guarantee a specific width (conditional compilation). However, we can still run into something called argument promotion, which is where a data type like int8_t is promoted to int32_t to accomodate a value which cannot be stored in the smaller width. In embedded programming, we don't want data types being manipulated under the hood. We want total control of their width, so that our program runs exactly as intended. Thus, it's better that the value overflow than get promoted.

1/12/2023

Bare Metal C

I definitely spoke too soon with yesterdays first entry for this book. I'm learning some awesome things now. Chapter 4, starting from Shorthand Operators, started to challenge me: it seems to explain that increment and decrement operators should only be used on their own lines of code, not like this while (arr[++i]);. This seems to be perfectly legal C; I'll have to investigate further. Then, we got into Memory-Mapped I/O Registers Using Bit Operations. Here the book introduced us to bitwise operators. I copied down the program on pages 73-74, and It found it probably the most elucidating part of the chapter. Finally, I answered all the questions at the end of the chapter. For question 2b, I found that I needed to clear any values that might be present in the parity bits before reassigning them to a different value:

// clear Parity

ledRegister &= ~Parity; // Parity is 0b00001100

// set Parity to 2

ledRegister |= 0b00001000;

1/13/2023

C++20 Masterclass

Lecture 62 on Literals. I thought I knew exactly what literals were beforehand, but this lecture gave a more nuanced understanding: at the moment, I would describe a literal as any value that is stored in the program binaries after compilation. In other words, if I have a program that takes input from the user,

int not_a_literal;

std::cout << "Enter an integer: ";

std::cin >> not_a_literal;

std::cout << "You entered " << not_a_literal << std::endl;

then I could use a non-literal (not_a_literal). This, int literal;, must be a literal. QUESTION: if a variable is uninitialized, does that make it a non-literal? I think the answer is yes.

[EDIT from 1/28/2023] My understanding here is incorrect. A literal is in fact simply the value used to initialize a variable.

1/14/2023

Bare Metal C

Today was a light day, but I did manage to get a little study in. I read quickly through the first 6 pages of chapter 5 since it was entirely review. It was all about loop and conditional statements. I stopped just before the section entitled "Using the Button"; I'm fairly certain this section will provide me with new material to digest.

Miscellaneous

I realized I don't have a full understanding of the semiconductor, which is a key component of the modern computer (if not THE key component). I pulled up a tab, What is a Semiconductor, and did a cursory read through. I fully intend to revisit this topic soon.

1/15/2023

Bare Metal C

Completed chapter five today, though I've yet to solve all the problems at the end. Here are the main takeaways from Chapter Five starting from the section entitled "Using the Button":

- We were shown three types of input circuitry: pullup, pulldown, and floating. These all have to do with how current is directed before closing the circuit and how it is redirected after closing it.

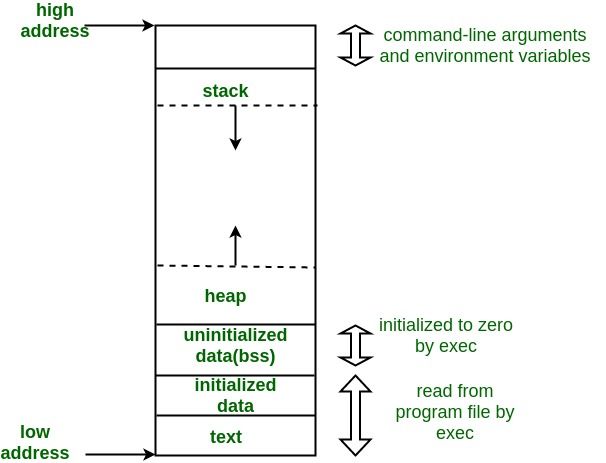

- When analyzing the code in "The Break Statement" section, I noticed the static keyword and I wanted to refresh myself on its purpose. I found an article on GeeksForGeeks that explains static allocation very well. From there I found another article on the memory layout of C programs. I found the following image very helpful:

BSS, the block of memory where static variables are stored, stands for "Block Starting Symbol". The static keyword causes a variable to be stored in the .bss segment of memory; furthermore, it can be used to make a variable global even if it is declared within some local scope.

1/16/2023

Bare Metal C

It took me far too long to solve chapter 5 problem 1; it's because I was trying to minimize the number of variables and use only two for loops, printing the product of their iterator variables. I completed the program, but I needed to use one initial for loop to set the column headers (X 0 1 2 3 ...).

I solved problem 2 much quicker and had a lot more fun! I enjoy anything that involves bitwise operations; it feels sneaky. For this problem, I had to write a program that could count the number of bits set to 1 in a 32-bit unsigned integer. I did it by running a for loop 32 times while ANDing the number against a 32-bit test value (0x80000000), only the most significant bit set to 1. If the result of the AND is equal to the test value itself, then a count variable is incremented. This is how I counted the number of bits.

I solved problem 3 this evening. I'm proud of my solution: I was able to make my program more user-friendly by using familiar musical jargon. All the user has to do is specify the TimeSignature and Tempo and then populate the uint8_t array with the beats on which they want the light to flash.

1/17/2023

Bare Metal C

Solved problem 4, which asked me to write a program that blinks out "HELLO WORLD" in Morse Code on the Nucleo board LED. Once again, I took it a step further and gave the user the ability to easily change the message as they like. I did this by implementing a Morse Code alphabet matrix and a simple hash function. The user simply types their message into a string literal using only uppercase alpha chars and spaces. The program then interprets this at Morse Code.

Problem 5 tasked me with writing a program that computes the first 10 primes. I wrote a program that computes primes up to an upper bound of 0xFFFF (the maximum value as can be stored in uint16_t). To reduce time complexity, I used an array that remembers the prime numbers we've already computed and uses only the necessary amount of them to compute the next prime in the sequence. Unfortunately, the space complexity had to increase for the time complexity to decrease; there may yet be a further way to optimize this code, but I'll leave it here for now.

1/18/2023

Bare Metal C

Solved problems 6 and 7. These were very easy, so I spent most of my time making them as clean looking as possible. Problem 7 asked me to write a program that prints only the vowels from a string. I'm pleased to say that after working with ASCII codes so much now, I was able to recall the codes for the vowels, both upper and lowercase, from memory.

C++20 Masterclass

Completed lectures 63 through 66. They were all about "const", "constexpr", and "constinit". I understand const very well at this point: it allows the programmer to make it very clear that the variable should not be altered - in fact, it can't be since the compiler will throw an error if it is altered. CONST makes a variable read-only. CONSTEXPR allows us to specify that a computation should occur at compile time instead of runtime (I still need to better my understanding). CONSTINIT "...ensures that the variable is initialized at compile-time, and that the static initialization order fiasco cannot take place (source). Also, I found an article on geeksforgeeks.org that helps clarify what "constexpr" does and how it is different from "const".

Linux

I wrote a bash script that streamlines the process of updating the driver for the wifi dongle connected to my desktop. Here's the script:

\#!/bin/bash

\# A script that streamlines updating the cudy wifi dongle

cd /home/seandavidreed/cudy_driver/rtl88x2bu_linux

sudo make

sudo make install

sudo modprobe -r 88x2bu

sudo modprobe 88x2bu

1/19/2022

Linux

I wrote another bash script; this one is very simple:

\#!/bin/bash

cd /home/seandavidreed/my\_programs/studentlog4.0

./studentlog

It’s just an easier way to launch my studentlog console application. Now I just open a console and type in studentlog.

C++

I watched this video on the constexpr keyword, and it was very helpful! Here’s the notes:

- Why is constexpr interesting?

- No runtime cost, no need execution time, minimal executable footprint, errors found at compile time, no synchronization concerns.

constexpr int const\_3;

// is interchangeable with

static const int const\_3;

// but constexpr works with float, for example

// while static const does not.

The video had some examples that were beyond my ken, so I skimmed the latter half of it.

1/20/2023

C++20 Masterclass

I completed lectures 67-69. They were on implicit and explicit data conversions, implicit conversions as with:

int sum {};

double x {4.56};

double y {5.67};

sum = x + y; // the value will be truncated and stored in sum as 10

and explicit conversions as with:

int sum {};

double x {4.56};

double y {5.67};

sum = static_cast<int>(x + y); // same result as before, but explicitly so

C++ Practice

I decided to give myself some meaningful programs to write in C++ so I can apply what I'm learning. I've been reading up on the graph data structure, and I found a great resource: this website gave a great demonstration of a graph class in C++. I pretty much copied the code exactly line-by-line, and it improved my understanding. I'll have to keep wrestling with this concept.

1/21/2023

Researching the Graph Data Structure

I didn't get much of a chance today to write code, but I did read up on the graph data structure more. I had to stop myself from feeling discouraged: I was feeling the strain of learning a new concept, and I was tempted to criticize myself for feeling that way. I had to remind myself that learning is always hard, and that I was probably feeling tired from working my regular job.

1/22/2023

Graph Data Structure in C++: adjacency_list1.cpp

I did it! I implemented my own graph class in C++! The example I found on techiedelight was indispensible, but when I implemented my own, I tried to do so by thinking through the logic and syntax myself. Moreover, I wrote the constructor such that the user initializes the graph at runtime. After reading on geeksforgeeks, I was able to understand the graph data structure much better. Also, my mind is much sharper today.

I decided to start including practice programs in this daily log repo. That way I can keep a much clearer record of my progress. Hence, I've added the link to the header of this entry.

GIT

It's settled. I need to become more proficient with git, and that probably entails taking a short class, or watching in-depth tutorial videos. Let me try to outline the problem I faced today:

- Need to clone this repository (programming_log) onto my desktop computer, but I keep getting this error:

Support for password authentication was removed on August 13, 2021. - Try using GCM (git-credential-manager), which I believe I used successfully on my laptop. For some reason, I can't get it working. Even though GCM is configured, running git clone on HTTPS still throws the original error, like gcm is failing to run. Because I'm too fed up to troubleshoot further, I look for other options.

- Try using SSH (Secure Shell Protocol). Following this tutorial, What is a Git SSH Key?, I'm able to create a key-pair on my local machine stored in .ssh in my user directory. The final command,

ssh-add -K /Users/you/.ssh/id_rsa, results in a request for an authenticator pin, which I don't have set up with GitHub, so I ask stackoverflow for help. Turns out I just need to omit-Kfrom the command. - Go to profile, settings, and SSH and GPG Keys. I add new SSH key, but try as I might, I can't seem to get the correct format for the key even though I'm copy-pasting it from .ssh

Key is invalid. You must supply a key in openssh public key format. Turns out I'm using the private key, and I need to use the public key (.pub). After troubleshooting further formatting errors, I succeed. - Create new directory, initialize it as a local repo, and run

git remote add programming_log git@github.com:seandavidreed/programming_log.git. I get this warning:The authenticity of host 'github.com (192.30.252.128)' can't be established. With further digging, I arrive at GitHub Docs from which I learn I can add GitHub public key fingerprints to a file I have to create (known_hosts) in~/home/seandavidreed/.ssh. This resolved the warning. - Run

git fetch, which works, and thengit merge, which doesn't. I getfatal: no remote for the current branch. I find out that since I just initialized this local repo, it doesn't have a real branch,fatal: You are on a branch yet to be born, and it won't until I commit something. I realize I'm doing this all wrong. - Realize that I don't need to create and initialize a local repo; I can just run

git clone git@github.com:seandavidreed/programming_log.git, which is the clone command with SSH. It works and I now have the remote repo on my desktop, from which I am updating this programming_log.

Oh the suffering. Oh the naivete.

Graph Data Structure Again! adjacency_matrix1.cpp

I decided to try my hand at the adjacency matrix representation of a graph. I can see why the adjacency list is more popular. While the matrix is conceptually easier to understand and implement, the list has a significantly reduced space complexity. I could feel the weight of all the space being used as I wrote the program! I suspect I could optimize that space complexity when I refactor.

1/23/2023

Research

I didn't get to do my usual study today, but I did create a new Reddit account with the aim of joining several programming subs, where I can hopefully learn more about building my own projects from the community.

I also created an account with OpenAI playground so I could use chatGPT. I was able to test it out, submitting queries such as "write a graph data structure in C++" and a question related to the beauty industry (that Rachel formulated) that only a professional would know. The AI answered both queries expertly. Even though I know that chatGPT is really only recycling and rehashing content created by humans and shared on the internet, the fact that it does it so well makes me worry for the future of disciplines like programming. I Know my fears are somewhat irrational, but I can't help it!

1/24/2023

Bare Metal C

I read through Chapter six entirely this afternoon. It was on Arrays, Pointers, and Strings, all things I'm well familiar with. Nonetheless, I did have a few takeaways:

- "The size of the pointer depends on the processor type, not the type of data being pointed to. On our STM32 processor, we have 32-bit addresses, so the pointer will be a 32-bit value.

- When initializing a pointer, we specify the data type to which it points (uint32_t *ptr). This does not do anything to the size of the pointer itself, but rather it indicates how the pointer is to be incremented in the case of pointer arithmetic, i.e. (ptr + 1) will move the pointer over by four bytes since a uint32_t is four bytes in size. It will go from 0xFFE0 to 0xFFE4, for example.

I solved programming problems 1 through 3. Number 3 proved to be a most interesting challenge: "Write a program to scan an array for duplicate numbers that may occur anywhere in the array." The first solution that came to mind was brute-force, touching every element and testing it against every other element for a time complexity of O(n^2). For any sufficiently large array, this method is absurd. I thought of other solutions as well, but ultimately I realized that the best thing to do would be to sort the array beforehand. Naturally, I turned to merge sort. Loosely referencing an old implementation of mine, I wrote another mergesort algo, and in the process, I found that with a few tweaks the mergesort algorithm itself could count the number of duplicates while sorting the array. Here's what I wrote:

/*

* duplicates2.c

*

* Created on: Jan 24, 2023

* Author: seandavidreed

*/

#include <stdio.h>

#include <stdlib.h>

#include <stdint.h>

#include <math.h>

#include <time.h>

#define TYPE uint16_t

// ARRAY_SIZE cannot be a value larger than can be held

// by the fixed-width data type above

#define ARRAY_SIZE 1000

void initRandomArray(int *arr, int size) {

srand(time(NULL));

for (TYPE i = 0; i < size; ++i) {

arr[i] = rand() % (int)pow(2.0, sizeof(TYPE) * 8.0);

}

}

void mergeSort(int *arr, int *temp, int i, int j, int *count) {

// base case

if (i >= j) return;

// recursive case

int mid = (i + j) / 2;

mergeSort(arr, temp, i, mid, count);

mergeSort(arr, temp, mid + 1, j, count);

int lptr = i;

int rptr = mid + 1;

int k;

for (k = i; k <= j; ++k) {

if (lptr == mid + 1) temp[k] = arr[rptr++];

else if (rptr == j + 1) temp[k] = arr[lptr++];

else if (arr[lptr] == arr[rptr]) {

temp[k] = arr[lptr++];

(*count)++;

}

else if (arr[lptr] < arr[rptr]) temp[k] = arr[lptr++];

else temp[k] = arr[rptr++];

}

for (k = i; k <= j; ++k) {

arr[k] = temp[k];

}

}

void printArray(int *arr, int size) {

for (TYPE i = 0; i < size; ++i) {

printf("%d ", arr[i]);

}

printf("\n");

}

int main() {

int array[ARRAY_SIZE], temp[ARRAY_SIZE];

int count = 0;

initRandomArray(array, ARRAY_SIZE);

printArray(array, ARRAY_SIZE);

mergeSort(array, temp, 0, ARRAY_SIZE - 1, &count);

printArray(array, ARRAY_SIZE);

printf("Number of Duplicates: %d\n", count);

return 0;

}

1/25/2023

Bare Metal C

Finished the last two problems in chapter 6, numbers 4 and 5. They were pretty easy. However, I ran into a snag on problem 5; I was trying to pass a char pointer as an argument to my void capitalize(char *str) function, which capitalizes the first character of every word beginning with a letter. The problem is when a string is declared like this char *str = "This is a string.";, the string is not mutable. I needed to instead declare it like so, char str[] = "This is a string.";. Though I've looked into this topic many times, I still find the terminology somewhat abstruse. However, the concept is intact. Here's a resource on the subject: Character Strings in C.

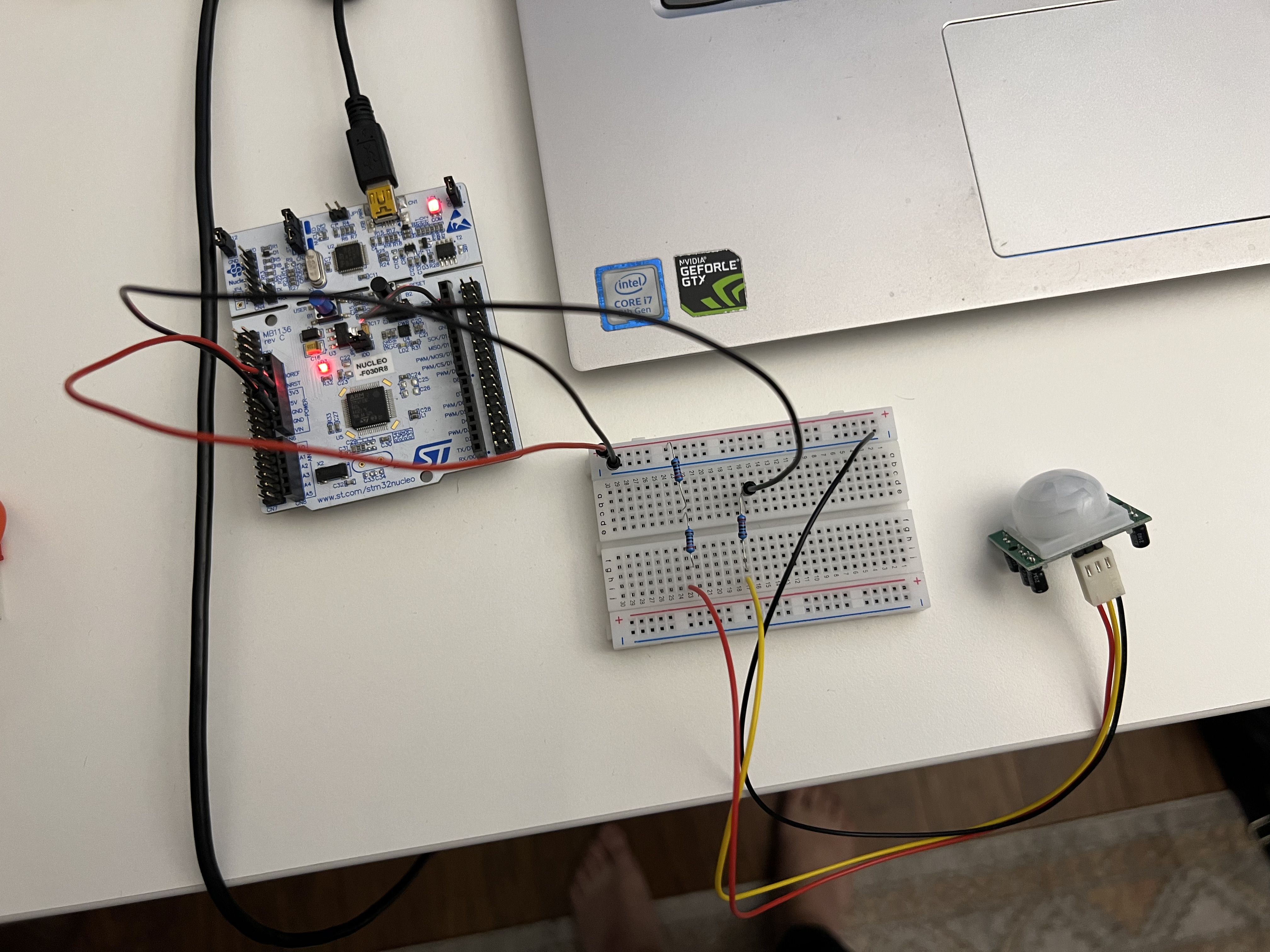

Hardware

Woohoo! I successfully test my PIR motion sensor from adafruit. It’s a small feat, but I’m proud of it nonetheless. Here’s a picture: picture. I sorted out the configuration by consulting this documentation

C++20 Masterclass

Made my cpp20-masterclass projects folder into a git repository. Now I can work on these projects on my Linux desktop as well! I had to make some adjustments in the .json files, but it was relatively simple. I noticed that if I don’t have the C/C++ Microsoft extension installed, then VSCode won’t recognize the the key-value “type”: “cppbuild” in tasks.json.

1/26/2023

C++20 Masterclass

Completed 72 - 74. All on bitwise operators. The demonstration of data loss via shifting bits left or right was most helpful.

1/27/2023

C++20 Masterclass

Completed 75 and 76. It was mostly review, but I appreciated the way the information was presented. We were able to clearly demonstrate the behavior of the bitwise logical operators.

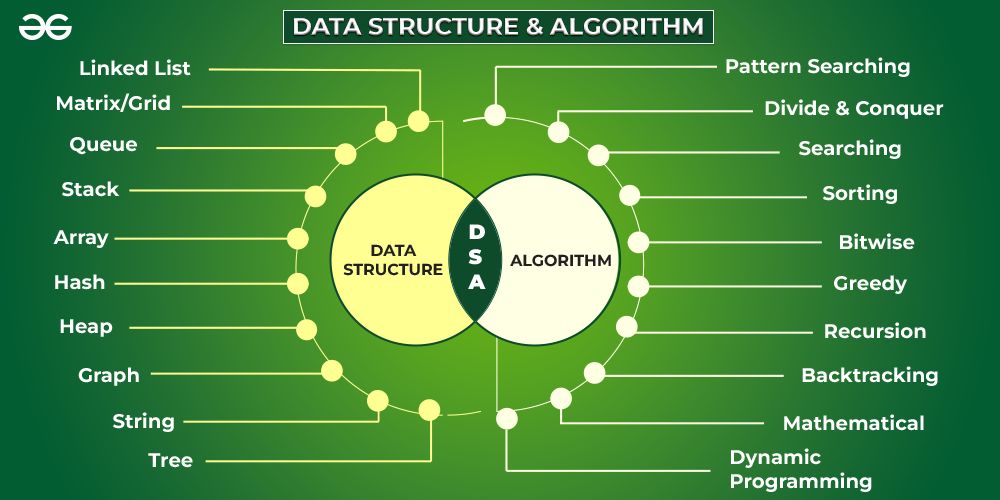

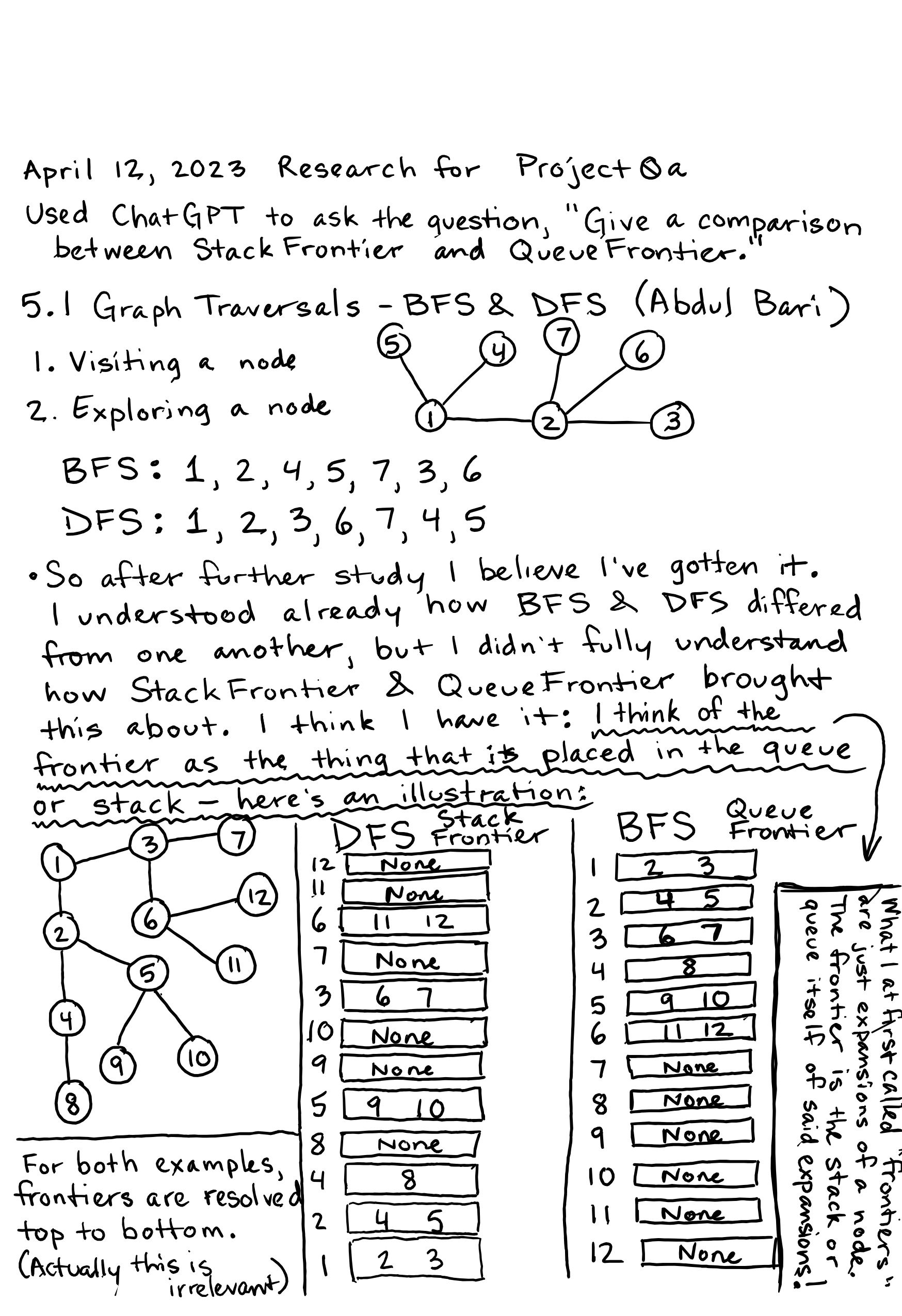

Data Structures and Algorithms

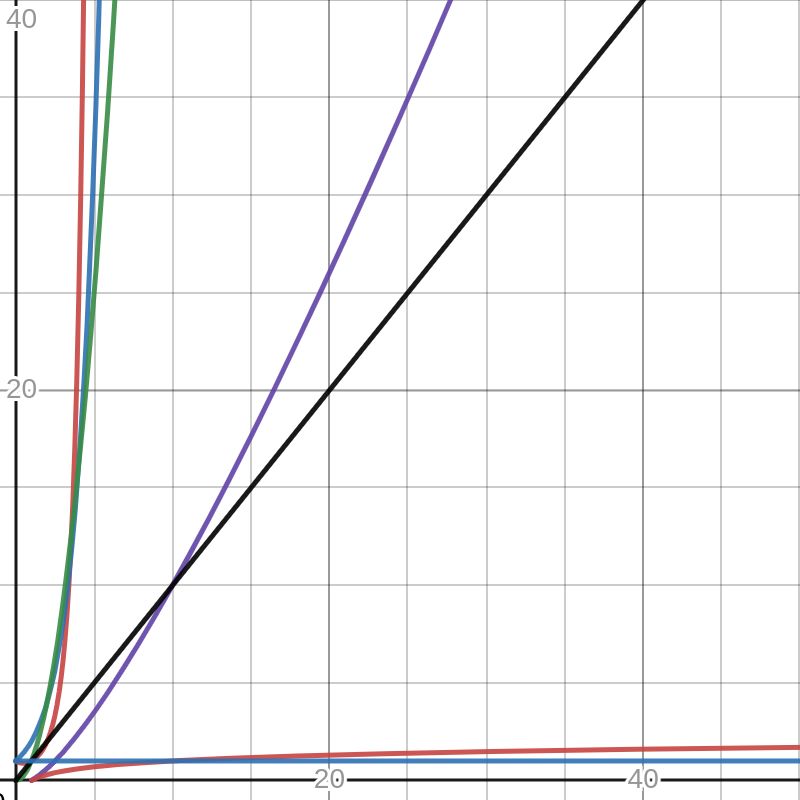

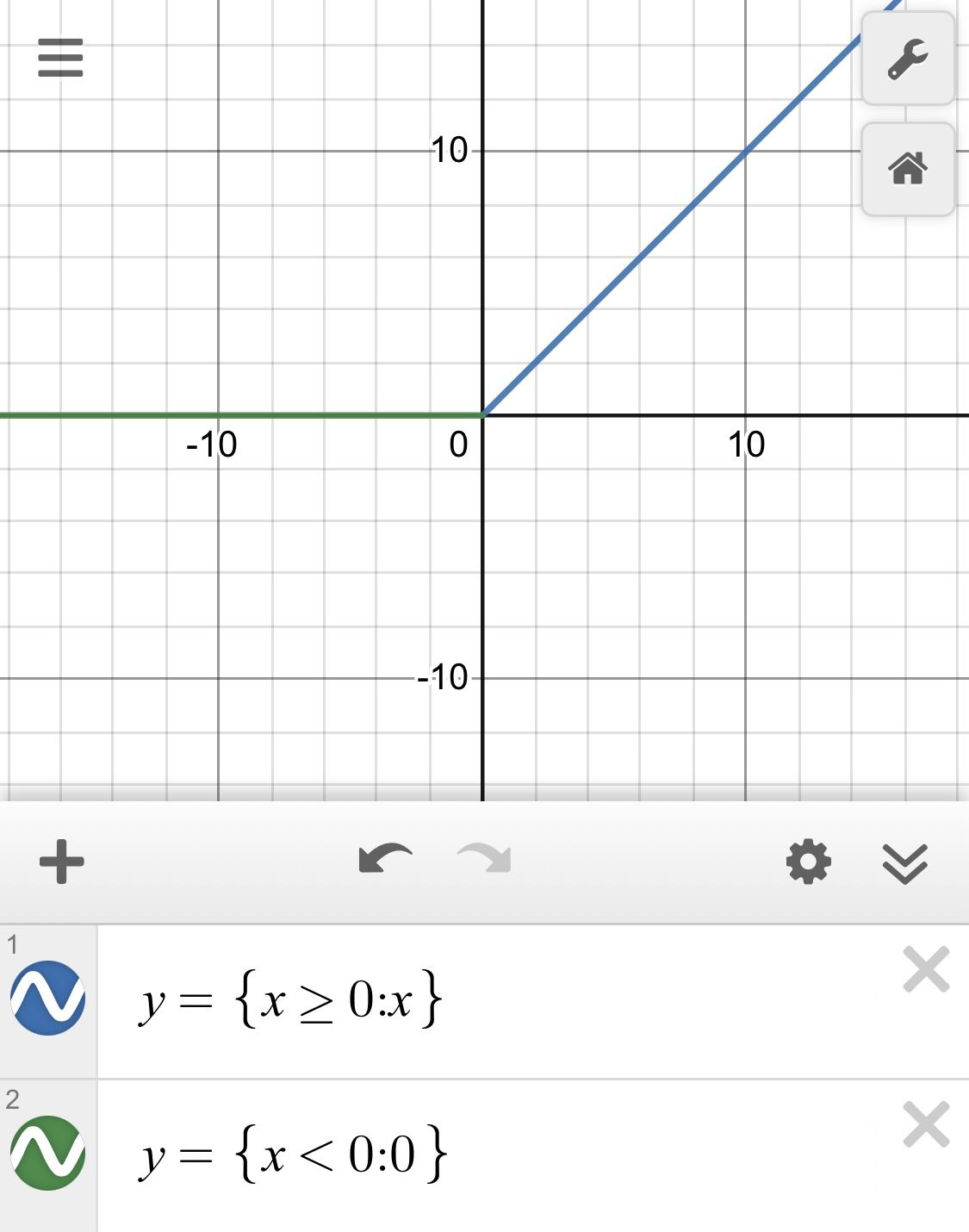

I need to study DSA alongside my C++ study; that way I have practice problems to tackle with the language. I'm consulting an article on geeksforgeeks to get a better understanding of DSA. After the overview of Data Structures and Algos, we get into time and space complexity. I decided to plot each complexity function on a graph on desmos. Below is the image I created:

In order from worst to best: O(n!), O(2^n), O(n^2), O(nlogn), O(n), O(logn), O(1).

I also consulted a list of examples for each complexity on stackoverflow.

I tried in vain today to create a circular linked list. I had the right ideas in mind, but my brain was simply too foggy and weary to deliver. Oh well. I'll try again tomorrow.

1/28/2023

Data Structures in C++ Circular Linked List

I successfully implemented the circular linked list! It was actually rather a challenge to wrap my head around it, but in the end I was able to reason it out. I intend to keep studying various data structures and algorithms using C++ and roughly following the image above. I'm reacquainting myself with all things linked lists, and then I'll move on the the matrix and see if there's anything I'm missing there. Moreover, I'd like to see some real life applications for these data structures. For instance, I want to see a tangible use of the circular linked list. Perhaps tomorrow I will search for one.

1/29/2023

Data Structures in C++ Circular Linked List

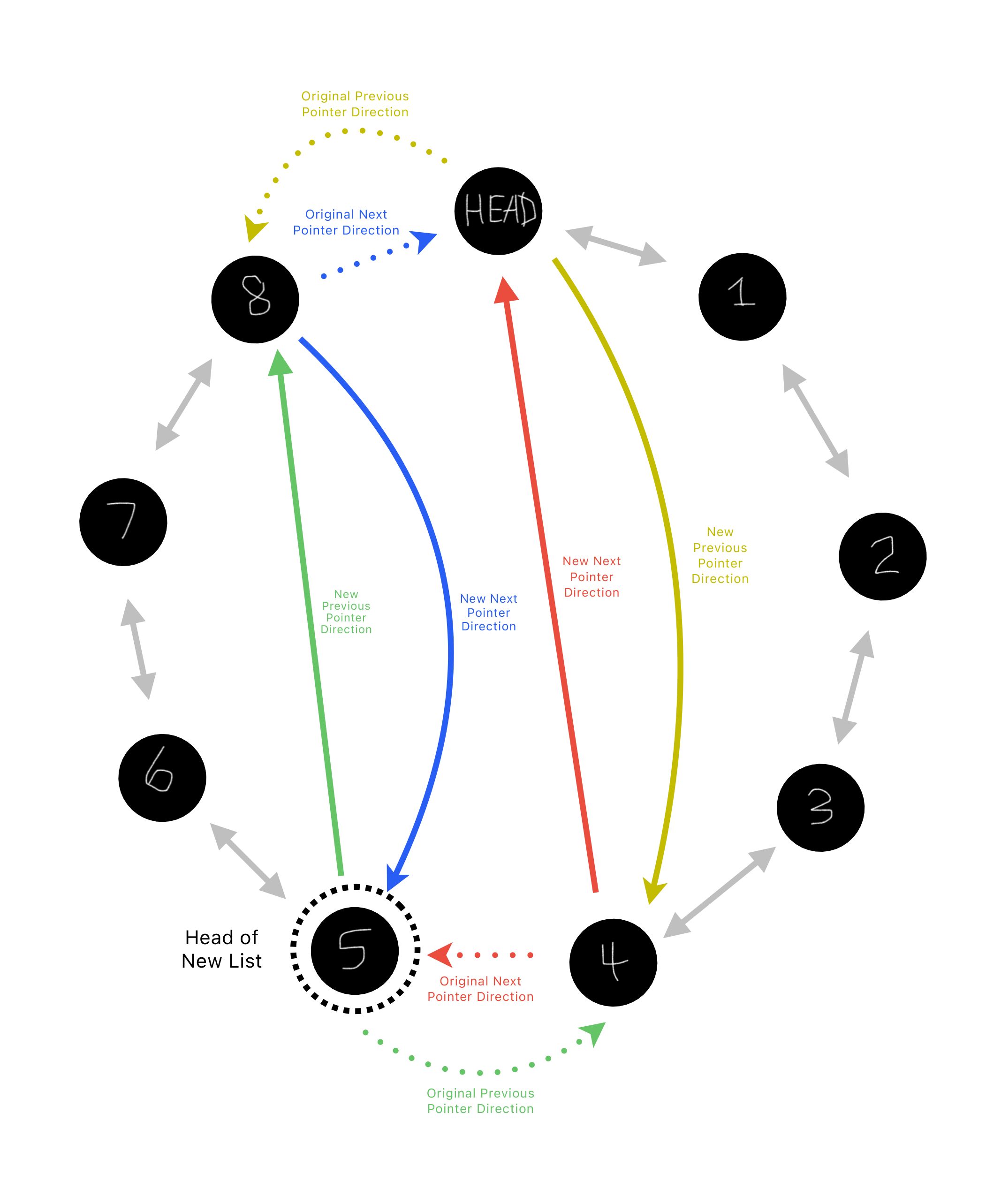

After yesterday's successful implementation of the circular linked list, I went on geeksforgeeks and browsed the practice problems. I found this problem, which enjoined me to write a funtion that splits a circular linked list into two circular linked lists. The problem is listed as medium difficulty, but I made it even harder on myself: I could have written a function that merely copies each half of the linked lists into two new linked lists, but I didn't want to increase time and space complexity that much. I wanted to find a way to manipulate the pointers in the existing linked list and create two lists by simply redirecting the node pointers diametrically-opposed to the head pointer, one to the head itself, and the other to become a new head that then points back to head->previous. Observe the graphic:

Moreover, I wanted to create this split function, which I called void mitosis(CircleLink& newList), as a method inside the CircleLink class. It all works! I had to suffer to get it to work, and I found that my main problem was in reassigning the pointers; I had a hard time keeping it straight which one was pointing where as I redirected them until I created more pointers to hold onto important addresses. Therefore, I succeeded in my initial design, but it may not be worth the amount of extra space I needed for each pointer. I'll be happy with it for now.

1/31/2023

Data Structures in C++

Deletion in a Circular Linked List

Yesterday after a busy day outside, I wrote a delete node function, and it wasn't working. For the life of me I couldn't figure it out: I thought it was another issue with reassigning the pointer addresses, but this morning I opened up the code and the error stared me in the face:

void deleteNode(unsigned value) {

Node *ptr = head;

for (int i = 0; i < numNodes; ++i) {

if (ptr->value = value) {

ptr->previous->next = ptr->next;

ptr->next->previous = ptr->previous;

delete ptr;

--numNodes;

return;

}

ptr = ptr->next;

}

}

I clumsily used an assignment operator instead of an equality operator in the if condition. That was all that was wrong!

I started working on a C++ program to sort a matrix, and I found myself perplexed at some of the differences between pointers and arrays. I've learned this stuff before, but it's likely there's a finer-grain understanding I've yet to achieve; I read this article, which helped to clarify some of the distinctions between them. The following is a quote from the article:

A common misconception is that an array and a pointer are completely interchangeable. An array name is not a pointer. Although an array name can be treated as a pointer at times, and array notation can be used with pointers, they are distinct and cannot always be used in place of each other. Understanding this difference will help you avoid incorrect use of these notations. For example, although the name of an array used by itself will return the array’s address, we cannot use the name by itself as the target of an assignment.

Reading this article brought to mind an old question I've had, namely "what does it mean that an array 'decays' into a pointer when it is passed to a function?" After searching around on the internet, I got a good grasp of the concept of array decay. The quote above holds the key: a pointer and an array name are not identical. The array name, like the pointer, yields the address of the first element, but it also contains with it the sizeof the whole array, which can be accessed by the sizeof() operator. Contrarily, if the sizeof() operator is run on a pointer, the size of the pointer will be returned. When an array is passed to a function, a pointer to the address held in the array name is passed instead. This can be circumvented by passing the array by reference. Observe the following examples:

// This first example will result in pointer decay

// since the array is passed by value.

#include <iostream>

unsigned long foo(int arr[]) {

return sizeof(arr);

}

int main() {

int arr[10] = {};

std::cout << sizeof(arr) << std::endl; // prints 40

std::cout << foo(arr) << std::endl; // prints 8 (on 64-bit machine)

return 0;

}

// This example won't result in pointer decay

// since the array is passed by reference.

// This can also be accomplished with

// unsigned long foo(int (*arr)[10])

#include <iostream>

unsigned long foo(int (&arr)[10]) {

return sizeof(arr);

}

int main() {

int arr[10] = {};

std::cout << sizeof(arr) << std::endl; // prints 40

std::cout << foo(arr) << std::endl; // prints 40

return 0;

}

2/1/2023

Data Structures in C++ Sort A Matrix

Today I completed the sort algorithm for a matrix. I implemented Selection Sort, which has time complexities of O(n^2) and $\Omega$(n^2). The initial challenge I faced was how to iterate through the matrix in a way that worked for selection sort; I needed to maintain two sub-arrays inside a matrix. The solution was to treat the matrix as an ordinary one-dimensional array. By including the printAddresses() function in my class Matrix, I was able to see how the arrays were arranged in memory. The addresses in a given row were of course spaced by 4 bytes, the typical width of an int. However, between one row and another, there was a buffer of 16 bytes. My naive hope that they would somehow be contiguous was dashed, so I decided to iterate through the matrix traditionally, with i and j. However, I made the inner loop like this:

while (i < _rows) {

if (matrix[i][j] < matrix[iSmall][jSmall]) {

iSmall = i;

jSmall = j;

changes = 1;

}

++j;

if (j == _cols) {

++i;

j = 0;

}

}

The bit at the end below ++j allowed me to iterate through each row as if they were contiguous. Conceptualizing the matrix as an ordinary array was the key.

The first iteration I got working included the swap operations in the inner while loop. This meant that worst-case scenario, the number of swaps performed would be O(n^2). However, when I looked it up, the internet kept saying that there should be at worst O(n) swaps. After a quick look on stackoverflow, I learned that I needed to put the swap operations in the outer while loop below the inner loop. In the inner loop, I then needed to keep track of the indices of the current smallest element, not the element itself.

For fun, I analyzed the difference in runtime between these two implementations:

O(n^2) swaps vs O(n) swaps

.0126s .0115s

The matrix I used to get these average figures was rather small, hence the one millisecond difference. If the dataset were much larger, the runtime difference would likely be much more noticeable. I had fun with this problem!

C++20 Masterclass

Completed lecture 77 on bitmasks. This is the very same content I've been reviewing in Bare Metal C, which I'd like to get back to soon. I'm eager for the time when I can build confidently with C++.

2/2/2023

C++20 Masterclass

Completed lectures 78 and 79, which concludes Section 12 on Bitwise Operators. I especially liked the example in 79, where we packed RGB values into one 4-byte unsigned int type. With this method, there is one unused byte, but this is still far more efficient than using 12 bytes (in the case of 3 ints) for values that span 0 to 255.

Data Structures in C++ Stack

Running down the list of Data Structures on geeksforgeeks, I implemented a Stack class in C++. Compared to other data structures, this one is fairly easy to build.

STM32cubeIDE

I downloaded this IDE today with the hopes of using it to program the Raspberry Pico and the Nucleo F030r8 development board. I'm really eager to get a true embedded project off the ground!

2/3/2023

C++20 Masterclass

Completed lectures 81 through 84. There was not much new here, so I watched the videos at increased speed. I didn’t have time for much else today!

2/4/2023

Bare Metal C

I completed chapter 7, which was all about Local Variables and Procedures. The bits on local variables helped sharpen my understanding of static allocation, which occurs at compile time and is hard-coded into a programs binaries before it even runs. That is much clearer now. Using the GDB debugger in STM32 Workbench, we were able to observe function calls being added to the stack. This was especially interesting in the case of the recursive factorial function: I witnessed the stack grow to the necessary size to initiate the functions base case, and then shrink as each stack frame resolved and was destroyed. Tomorrow, I plan to do the practice problems.

2/5/2023

Bare Metal C

I Solved practice problem 3 in chapter 7. It was the classic recursive fibonacci function problem. I remember this being difficult to write the first time I tried it early last year, but now it was extremely easy; I just needed to refresh myself on the fibonacci sequence formula.

#include <stdio.h>

#include <stdlib.h>

unsigned int fibonacci (unsigned const int n) {

// Base Case

if (n == 1) return 0;

else if (n == 2) return 1;

// Recursive Case

return fibonacci(n - 1) + fibonacci(n - 2);

}

int main() {

printf("%u\n", fibonacci(5));

return 0;

}

Exploring the Nucleo Board and STM32 Cube IDE On My Own!

My goal is to connect the PIR (Pyroelectric InfraRed) Motion Sensor I got from Adafruit to my Nucleo F030R8 development board.

-

First, I wanted to check the power pins on the development board to see if I needed to initialize them. I connected test leads to the 3V3 and GND pins and used my multimeter to read the voltage, which indeed was 3.3 volts.

-

Next, I consulted the Bare Metal C book, from which I refreshed myself on LED initialization:

LED2_GPIO_CLK_ENABLE(); GPIO_InitTypeDef GPIO_InitStruct; GPIO_InitStruct.Pin = LED2_PIN; GPIO_InitStruct.Mode = GPIO_MODE_OUTPUT_PP; GPIO_InitStruct.Pull = GPIO_PULLUP; GPIO_InitStruct.Speed = GPIO_SPEED_FREQ_HIGH; HAL_GPIO_Init(LED2_GPIO_PORT, &GPIO_LedInit);I placed this code inside the comments:

/* USER CODE BEGIN Init */ /* USER CODE END Init */found inside the main.c source file.

-

I needed to understand the

LED2_GPIO_CLK_ENABLE();macro so I could ostensibly apply the same thing to my motion sensor peripheral. I highlighted the statement, and selected "search text" and "workspace". The search did not reveal anything I needed. -

Explored the

stm32f0xx_hal_gpio.hlibrary to find the preprocessor definitions for the GPIO pins. I'm usingGPIO_PIN_2. -

Explored the

stm32f0xx_hal_conf.hlibrary and found an include forstm32f0xx_hal_rcc.h. I found that "RCC" stands for Reset Clock Control." I thought maybe I could find out what the correctCLK_ENABLE()macro would be for my motion sensor - to no avail. I decided to guess that it would be calledPIN_2_GPIO_CLK_ENABLE();. -

When I tried to build the project, I found that

LED2_GPIO_CLK_ENABLE(); PIN2_GPIO_CLK_ENABLE(); LED2_PIN; LED2_GPIO_PORT; PIN2_GPIO_PORT;were all flagged as implicit declarations. This means that there is a library that STM32 System Workbench includes by default and that STM32 Cube IDE does not. My next step is to retry everything back in System Workbench. I really just want to wrap my head around all this!

-

Found the solution! I copied all my code over to System Workbench and right away I noticed a library that was not included over at Cube IDE,

stm32f0xx_nucleo.h. Opening this library, I found several aliases; for example.GPIO_PIN_5is aliased toLED2_PIN, andGPIOAis aliased toLED2_GPIO_PORT.__HAL_RCC_GPIOA_CLK_ENABLE()is aliased toLED2_GPIO_CLK_ENABLE(). As I looked through the different macros, I came across these ones:#define NUCLEO_SPIx_SCK_GPIO_PORT GPIOA #define NUCLEO_SPIx_SCK_PIN GPIO_PIN_5 #define NUCLEO_SPIx_SCK_GPIO_CLK_ENABLE() __HAL_RCC_GPIOA_CLK_ENABLE()Looking at the development board, I saw that pin 13 was also named

SCK. I divined that I could connect my PIR Motion Sensor to this pin and use the macros above, and it worked! Here's a picture:

2/6/2023

Computing Technology Series on YouTube

This series is all about the evolution of the CPU from the Intel 4004 onward. I'm taking notes in a separate file for this one since I expect to write a lot, and I don't want to clutter up this log! Here's the link.

Raspberry Pi Pico

Last week I found a video that would show me how to start using the Raspberry Pi Pico with C/C++. Today, I followed along with the video and got everything working nicely. I can tell this microcontroller will be much easier to work with than the Nucleo F030R8. Therefore, I'll use it to learn some more beginner embedded techniques. I still would much like to get good at using the Nucleo board too. Anyhow, this video showed me how to write and run an led-blinking program on the pico. It also covered how to use a core for each led blinking operation; I thought that was pretty fascinating and I'm keen on learning more about it.

2/7/2023

Raspberry Pi Pico

I decided to set up the C/C++ sdk for the Pico on my desktop computer as well. This time I followed the official documentation. I left off at Chapter 4 - I intend to work my way through the whole document.

Bare Metal C

I'm in Chapter 8, which is on Complex Data Types (I can see that this chapter plus the next two will be quite important and will likely present new concepts). I read through the first part of this chapter regarding enums and a neat preprocessor trick that can be done with them. Here it is below for reference (I copied this from the book):

#define COLOR_LIST \

DEFINE_ITEM(COLOR_RED), \

DEFINE_ITEM(COLOR_BLUE), \

DEFINE_ITEM(COLOR_GREEN)

#define DEFINE_ITEM(X) X

enum colorType {

COLOR_LIST

};

#undef DEFINE_ITEM

#define DEFINE_ITEM(X) #X

static const char* colorNames[] = {

COLOR_LIST

};

#undef DEFINE_ITEM

After thinking through this process for a moment, it clicked, and I could see how useful it will be. I'll be working my way slowly through this chapter to absorb as much as I can.

2/8/2023

Bare Metal C

Chapter 8 new-to-me concepts in my own words:

-

The Enum data type is new to me, as outlined above.

-

The system architecture defines, among other things, the size of data that is to be passed along the address and data buses. In a 32-bit system, we essentially have a 32-lane superhighway for the data bus and a 32-lane superhighway for the address bus. Therefore, if we have a struct in memory that is 6 bytes in size, when the CPU performs a

fetchfrom RAM, it will first fetch 4 bytes (32 bits) of the struct via the data bus, and then it will fetch the remaining 2 bytes along with 2 bytes of padding (32 bits in total) - this padding is defined by the compiler, which prepares the struct according to the system architecture. -

When a structure is initialized, we can declare a struct name or a variable or both. If a struct is to be used only once, it makes sense to declare it with a variable and without a name:

struct { int dosage; int drug; } myPrescription;I won't have to declare this struct in main since it is declared here. We can declare both a name and a variable:

struct prescription { int dosage; int drug; } myPrescription; -

In the unions section, the concept of Endianness is touched on (without being named). It is another facet of the system architecture and it defines how a

wordis stored, either from lowest-order to highest-order byte, as in little Endian, or vice versa, as in big Endian. Little Endian is the most common since it allows a givenwordto be read the same regardless of the number of bits read:0x13 will be stored 1300 0000 0000 0000 in a little endian, 64-bit machine. If the same value were read as 32-bit 1300 0000, it will still read 0x13.

The term Endianness is derived from Gulliver's Travels, in which there are two factions of Lilliputians, one which cracks hard-boiled eggs from the big end, and the other which cracks them from the little end.

I left off at Creating A Custom Type, which I will pick up perhaps tomorrow!

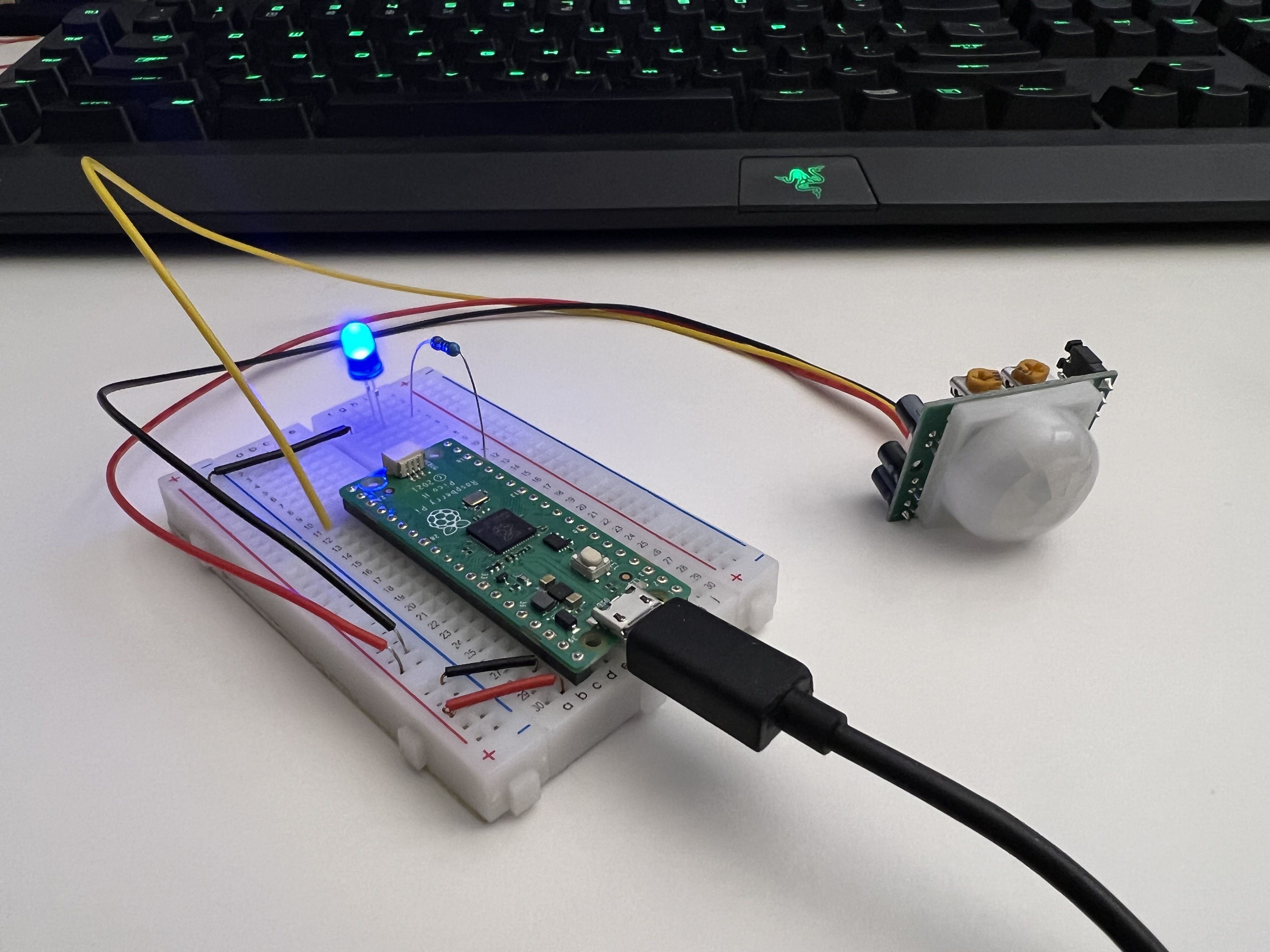

Raspberry Pi Pico

I decided to test out my PIR Motion Sensor with the pico, and writing the code proved to be much simpler than that of the Nucleo board. That doesn't surprise me though since I believe the Pico is designed to hold your hand a little more than the Nucleo F030R8. I got everything wired up on the breadboard, but then I noticed the sensor seemed too sensitive: it would trigger after a movement, but then it would keep triggering for an extended period of time without any movement. I proved this by walked out of the room and observing the LED via a mirror - it still flashed. I tried many things to troubleshoot; I thought maybe the signal was too strong so I added some resistors, but I realize this is a naive notion. After many hopeless attempts, I found the answer online; a reply on stackexchange suggested that Vcc should not be 3v3 but at least 5v. I had the sensor drawing power from 3v3, which evidently causes retriggering. When I changed the the power to 5v, the sensor began working perfectly!

Here's the code I wrote - I took the opportunity to practice with enums!

#include <stdio.h>

#include "pico/stdlib.h"

#include "hardware/gpio.h"

#include "pico/binary_info.h"

enum GPIO_IN_USE {

PIR_LED = 14,

PIR_SENSOR = 16

};

int main() {

bi_decl(bi_program_description("This is a test binary"));

bi_decl(bi_1pin_with_name(PIR_LED, "Peripheral LED"));

stdio_init_all();

gpio_init(PIR_LED);

gpio_init(PIR_SENSOR);

gpio_set_dir(PIR_LED, 1);

gpio_set_dir(PIR_SENSOR, 0);

while (1) {

if (gpio_get(PIR_SENSOR)) {

gpio_put(PIR_LED, 1);

sleep_ms(200);

gpio_put(PIR_LED, 0);

sleep_ms(200);

gpio_put(PIR_LED, 1);

sleep_ms(200);

gpio_put(PIR_LED, 0);

}

}

}

2/9/2023

Bare Metal C

I studied the design pattern found in the Creating a Custom Type section and I think it's pretty cool. I went ahead and replicated the program, adding my own touches, to fully understand the logic behind it. Here is the final product. I like the clever use of a union within a structure, which allows a structure to have a dynamic member. The addition of the enum is a nice touch too as it makes the code much more readable. It also allows the drawShape() function to recognize which shape is being passed in by checking the enum shapeType type member.

I wanted to practice with enums, but I found myself practicing with function pointers instead. I want to get a better grasp on their usage. Here's my silly little programs: func_ptr.c.

2/10/2023

Algorithms in C++ merge_sort

I wanted to practice with function pointers today, so I thought I'd try to implement a callback function for a sorting algorithm, the way Quick Sort is often implemented. I chose to build a Merge Sort Algo since it's kinda my pet algo at this point. I have studied it enough that I can now code it simply by thinking through the logic! It's a great feeling. I wrote a merge sort algo that works great, but I found myself unable to add a function pointer into the mix. I still don't understand their import enough to add them into my thought process. Oh well, I guess I got more practice with merge sort today.

I'm so glad I got back into Reddit again. I'm finding countless helpful tips on the r/embedded sub. For instance, today I found the Fastbit series on MCUs, which are available on Udemy, by reading this post. I ended up purchasing the class for cheap and I intend to start it once I complete Bare Metal C! Things to look forward to.

2/11/2023

C++20 Masterclass

I watched lectures 85 through 89 today. The majority were simply review, e.g. else and switch statements, ternary operator, and using integral types as booleans. There was a topic that was rather helpful: Short Circuit Evaluations. When a compound conditional statement with logical operators of one type is evaluated, only the information that is necessary to establish the condition is read. For example:

// The program will stop checking the conditions after the "false"

if (true && false && true && true) {

// Some code

}

The presence of that single false renders the whole statement false regardless of the following conditions, so the program disregards them. This is an important process to know because we can take advantage of it. We can write compound conditional statements with the most decisive conditions first. If we are writing a statement like the one above, we should put the condition most likely to be false first.

Algorithms in C++ mergeSort1000

I worked on my merge sort algorithm; I wanted to find a way to eliminate the need for an auxilliary array mergeSort(const unsigned int i, const unsigned int j, int arr[], int aux[]). Initially, I thought that an auxilliary array was being automatically allocated for every function call - such an operation would be extremely bloated, requiring something like nlog(n) number of arrays. This is not the case however. The initial array passed to the function decays into a pointer which is then passed again and again to the function recursively, so it seems there would be nlog(n) number of automatically allocated pointers instead. By removing int aux[] from the function parameters and replacing it with static int aux[1000] inside the mergeSort function, I simplified the use of the function, but I limited the size of array it can accomodate. A statically allocated array cannot have a variable size since it is baked into the program binaries. The statically-allocated auxilliary array is only defined once, at compile time, so even though the mergesort function behaves recursively, the auxilliary array will not. It's unclear whether I optimized the algorithm with this change, but I believe I did make it prettier, if that's worth anything!

2/13/2023

Watching Videos about AI

Following a brief conversation with Rachel's mom in which she mentioned a Google engineer that claims LaMDA is sentient, I watched the following videos:

- Google Engineer on His Sentient AI Claim

- No, it’s not sentient - Computerphile

- But How Does ChatGPT Actually Work?

Video three deviates from the topics of one and two a little bit; the first two regard LaMDA by Google, not chatGPT. What did I learn from these? First, the Google engineer is not claiming that the AI is sentient—he makes it clear that sentience is I’ll-defined anyways. As someone said in the comments, the attention-grabbing headline has likely more to do with the news outlets tactics than the engineer’s actual perspective. Second, I learned a little about transformer architecture, emphasis on little. I’m very intrigued by the developments in AI, but I know I need to keep on my current track toward embedded systems. Nevertheless, I’ll continue to gain surface knowledge on AI until such time that I can learn more deeply.

Bare Metal C

I found the Structures and Embedded Programming section informative. It mentioned the Small Computer System Interface (SCSI), which is a standardized way of transferring data to and from devices (Est. 1986). The standard uses a command block, which was only 6 bytes at first but is now 32, to specify the address and size of the data and the opcode of the operation to be performed on it. Following the read10 standard, we made a command block using a struct:

#include <stdio.h>

#include <stdint.h>

#include <assert.h>

struct read10 {

uint8_t opCode; // Op code for read

uint8_t flags; // Flag bits

uint32_t lba; // Logical block address

uint8_t group; // Command group

uint16_t transferLength; // Length of the data to read

uint8_t control; // Control bits, the NACA being the only one defined

};

int main() {

printf(“%ul”, sizeof(struct read10));

assert(sizeof(struct read10) == 10);

return 0;

}

The printf statement yielded 16, and the assert statement terminated the program since 16 != 10. In the book’s example, the program yielded 12 bytes for the size of struct read10. In both cases, the compiler automatically pads the structure if any of the data are unaligned. For instance, the books example is from a 32-bit system, so the data in the struct will be read in that word size, which makes uint32_t lba unaligned since its address is not offset by 4 bytes from the address of uint8_t opCode. The compiler adds 2-bytes padding between ‘uint8_t flagsanduint32_t lba` and the data is now aligned to the word size.

In my own example, I’m working with 64-bit system architecture, so the compiler did this:

struct read10 {

uint8_t opCode;

/* 3 bytes padding added */

uint8_t flags;

/* 3 bytes padding added */

uint32_t lba;

uint8_t group;

uint16_t transferLength;

uint8_t control;

};

I have to say that, after looking through the assembly code, I was a little perplexed at the way the data was moved, but I think I got the gist of what went on in the code block above.

2/14/2023

Bare Metal C fraction.c

Wow, I had way too much fun with Problem 1 of Chapter 8, and I learned a ton. The problem is written as follows:

"Create a structure to hold a fraction. Then create procedures that add, subtract, multiply, and divide fractions. The fractions should be stored in normalized form. In other words, 2/4 should be stored as 1/2."

Designing the struct was easy enough. Things got interesting when I began working on the fraction add(fraction *addend1, fraction *addend2) function. The initial arithmetic on the numerators and denominators was fun to implement. I wanted the code to be syntactically sugary and tight; I'm happy with what I got. I quickly realized that the second step, reducing the fraction to its simplest form, would need to be repeated for each arithmetical function (add, subtract, multiply, divide), so I created a separate function, void reduce(function *reduce). I couldn't find a better way to factor out the fraction besides using nested loops. I take half of the smaller term and iterate from 2 up to that number checking which divisors will leave no remainders. As long as a given divisor keeps dividing evenly, the function remains in an inner while loop.

Everything worked just fine until I needed to work with negative values; finding the smaller term becomes problematic because a negative number is always smaller than a positive number. What I really needed was the number that is closest to zero - I needed the absolute value. I didn't want to use the math.h library function, abs(). I thought about using bitwise operations with bitmasks. This proved to be the right idea, but I couldn't get the right implementation. I wanted one bitmask to both remove the sign from negative numbers and leave positive numbers unaffected. I caved and searched for the answer. These were the two sources I found:

Compute the Integer Absolute Value Without Branching

Bit Twiddling Hacks

At first, I was so baffled at how these functions worked:

int v; // we want to find the absolute value of v

unsigned int r; // the result goes here

int const mask = v >> sizeof(int) * CHAR_BIT - 1;

r = (v ^ mask) - mask;

I presumed the mask to be equal to 0x1 by the end of the operation, so I didn't see how this worked, yet it did. This page in the Right Shifts section cleared it up: when a right shift is performed on a negative integer, 1's are propagated to the right:

signed short int someValue = 0b1000000'00100000;

someValue >> 1;

// 0b11000000'00010000

someValue >> 4;

// 0b11111100'00000001

someValue >> 4;

// 0b11111111'11000000

This is reasonable since the right shift is supposed to reduce the magnitude of a value by half for each move. As more 1’s propagate, the negative value approaches 0. Apparently some processor architectures define a different behavior for right shifting negative integers, presumably causing the magnitude of the negative value to double with each right shift.

2/15/2023

Bare Metal C

- car_struct.c - Wrote a program for problem 2, and I added more functionality than was required for the sake of practice. The main goal here was to implement a car struct that contains a union for two structs of vehicle types, electric and gas. In addition to completing this, I implemented the enum preprocessor trick outlined on 2/6/2023. Additionally, I added a color member to the struct that stores RGB values in a

uint32_ttype. For this member, I wrote functions that read and write from the member with bitwise or and shift operations. I had a lot of fun doing it! - acmeTrafficSignal.c - Worked on this one for a good while. I couldn't understand why the problem wanted me to organize the program the way it did (I did take some liberties, like using function pointers and adding my own functions). In the end, I decided to roll with it. It was good practice for enums, unions, and function pointers.

2/16/2023

C++20 Masterclass

I completed lectures 90 through 92, and there was some material that was new to me:

if constexpr (condition) {}if constexpr allows us to evaluate an if condition at compile time provided the condition itself isconstorconstexpr. I can see this being very useful for situations where a user needs to be able to modify a constant value at the top of a program and the runtime needs to remain unaffected. The constant value might be tied to several conditional statements throughout a library or API, for instance, and if the calculations are heavy,if constexprwill make sure that the runtime is not affected by them.if (int someVar {10}; otherVar) {}This is also really handy. Now we can declare a variable only for the scope of a given if condition. This allows us to keep from cluttering up the namespace, which is especially important if the program is very large.switch int someVar {10}; otherVar) {}This works essentially the same way the if with initializer works.

2/17/2023

Bare Metal C

I started in on Chapter 9, Serial Output on the STM. Before reading the majority of this chapter, I didn't really have an idea of serial I/O. Now I undertstand that serial I/O in a general sense is the pushing of bits in series in what's called a stream. I imagine that the opposite of serial would be parallel, sort of like in electric circuits. When a CPU fetches data from RAM, I imagine the data could be said to be received via the data bus in parallel; there are multiple lanes in the superhighway delivering the data in multiple streams. Disclaimer: I might be making stuff up here. Really, I'm only trying to help myself conceptualize the process of Serial I/O. For serial communication with a microcontroller, we have to access RX (receiver) and TX (transmitter) pins. In the case of the NUCLEO board, the TX and RX pins are already wired up for us, so we don't need to connect any additional jumper cables.

I especially enjoyed reading the section A Brief History of Serial Communications, in which we are taken from the telegraph to the teletype to the computer. Through each advancement, serial communications technologies have remained relatively the same, except that they have increased in speed (baudrate, which is bits per second). In this section, there is a subsection, Line Endings, I found very interesting. Here's a direct quote:

--Begin Quote--

If you sent a character immediately after the carriage return, you'd get a blurred blob printed in the middle of the line as the printhead tried to print while moving.

The teletype people solved this issue by making the end of a line two characters. The first, the carriage return, moved the print head to position 1. The second, the line feed, moved the paper up one line. Since the line feed didn't print anything on the paper, the fact that is was done while the printhead was flying to the left didn't matter.

However, when computers came out, storage cost a lot of money (hundreds of dollars per byte), so storing two characters for an end of line was costly. The people who created Unix, the inspiration for Linux, decided to use the line feed (\n) character only. Apple decided to use the carriage return (\r) only, and Microsoft decided to use both the carriage return and the line feed (\r\n) for its line ending.

C automatically handles the different types of newlines in the system library, but only when you use the system library. If you are doing it yourself, ... you must write out the full end-of-line sequence (\r\n).

--End Quote--

More useful bits

- UART stands for Universal Asynchronous Receiver-Transmitter.

- USART stands for Universal Synchronous/Asynchronous Receiver-Transmitter.

- In synchronous serial communication, the transmitter continuously sends out characters even when idle to maintain the synchronization between the transmitter and receiver clocks.

- In asynchronous serial communication, the transmitter only sends characters when there is something to send. This form is used when it is reasonable to assume that the transmitter and receiver clocks can stay synchronized on their own.

2/18/2023

Bare Metal C

I finished up Chapter 9 today. At first I was a little displeased by the use of copy code to test out the USART, but my perspective changed when I got to the practice problems at the end of the chapter. These problems helped me to dig through the program and its included libraries and understand it better.

-

For problem 2, I was charged with "...changing the configuration so that you send 7 data bits and even parity (instead of 8 data bits, no parity)." The first step was to understand the function of the parity bit. Parity in mathematics is the even/odd quality of a number. In embedded programmin, a parity bit is used for error-checking. The error-checking method is established as either even parity or odd parity. In the case of even parity, the parity bit is set to 1 whenever there is an odd number of 1's in the 7 data bits and it is set to 0 when there is an even number. This ensures that when the data frame is transmitted, it will have an even number of 1 bits. If when it is received there is an odd number of bits, the receiving register will know that the data has been corrupted and it won't accept it. Odd parity works the same way but opposite.

- Here's what I did to solve this problem: I went into the

void uart2_Init(void)function and changeduartHandle.Init.Parity = UART_PARITY_NONEtouartHandle.Init.Parity = UART_PARITY_EVEN. I found the correct macro by right-clicking onUART_PARITY_NONEand selecting "Open Declaration". This change causedHello World!to look something like thisHello??orl?!. The space, 'W', and 'd' all have an odd number of 1 bits, so they were modified and thrown out of the range of printable ASCII characters.

- Here's what I did to solve this problem: I went into the

-

Problem 3 was about adding flow control to thr program to allow the user to start and stop the printing of

Hello World!. To solve this, I once again selectes some codeuartHandle.Init.HwFlwCtl = UART_HWCONTROL_NONEand selected "Open Declaration". There I found theUART_HWCONTROL_RTS_CTSmacro, which I deduced to be the value I needed. -

Problem 4 was certainly the most challenging, but with more browsing declarations and reading the documentation on character input, I was able to work out how to change the

myPutChar()function into a semi-functionalmyGetChar()function. The main takeaway from the documentation was how to use the RDR and RXNE variables.

2/19/2023

Today, I sat down to work on my programming, and I found myself feeling bored. I knew it was not the act of programming itself, but the lack of a project toward which I could direct my learning efforts. I’m glad to chip away at classes such as C++20 Masterclass, Bare Metal C, and eventually Mastering Microcontroller and Embedded Driver Development, but I need a personal project as well, like I had with studentlog and Inventory Manager.

I began brainstorming. How could I start a project that would have personal significance, practicability, and that would help solidify what I’m learning? Once again, synths and audio came to mind. After roaming the internet, I found this site, madewithwebassembly.com. After looking at some of the projects, I’ve decided to try to build a web application that can generate audio controlled by hand gestures! Since this will likely be a large project, I’ve created a separate markdown file to document the research and build process. Here it is: Gesture Midi Controller.

2/20/2023

Spent pretty much all of today working on my Gesture Midi Controller project. Though the project has yet to fully take shape, I'm getting myself prepared by learning about the SFML library and about how sound is represented digitally. Gesture Midi Controller

2/21/2023

Gesture Midi Controller Project

2/22/2023

Gesture Midi Controller Project

Once again, I spent all my time on this one. The benefits of doing this project are tremendous. I've learned so much more about C++ simply using it here (this should be obvious). Here's a few things of which I've gained a grasp: function pointers, constexpr, namespaces, short circuit evaluations (or something like it - this refers to the control flow manipulation I did with the if-else statements, which I describe in the project log), and of course, the SFML library. Of course, I'm not done exploring this library, nor building this project. I'm just getting started!

2/23/2023

Personal Website

After a hiatus, I finally got back to this project. Late last year, I set up a GitHub Pages website using Beautiful Jekyll, a template built with Jekyll static site generator. At the time, I had other projects going on, so even though the setup looked easy, I remember being in a hurry and failing to be properly assiduous. Now, returning to this project, it's much easier to sort out. I'm slowly building my site into a personal resume. I intend to display this programming log, any blog posts I write, and my projects (hopefully I can even link in a WASM page or two where my programs actually run in the browser).

I did run into some hiccups today. I uploaded the programming_log folder to the Github Pages repo and it threw an error, which I overlooked. Subsequently, any changes I made to the websites aesthetic failed to propagate. At the end of the day, I found it was because the size of the programming_log folder was too large. Evidently it was halting any further changes to the repo.

2/24/2023

Personal Website

I’m anxious to get this site up and running so I can start showcasing my work, but it’s been nothing but headaches so far! I’ve been committed to using Jekyll for the build, and yet so many templates I’ve tried have been convoluted in their implementation. I think I’ve finally found the one I’ll stick with; I’ve already made several modifications to it. Hopefully I can be done with this effort soon so I can get back to embedded systems and C++ programming.

2/25/2023

Personal Website

Well I did it; I made this programming-log repository public so I could link to it from my website. Speaking of my website, I think I've settled on the right template. I'm finding it much easier to modify according to my needs than other templates I tried. I've also added a projects page where I will place links and descriptions for my most significant projects.

2/26/2023

Personal Website

Today, I made my student-log repository public so that I could link it to my website. Before making it public, I went through and cleaned it of any information, i.e. student info, that I inadvertently included in my commits last year: I was only just getting started with git. To do this, I simply removed the .git and .gitignore files from the local directory, deleted the corresponding remote branch, and reinitialized the local directory and pushed it to a new branch.

2/27/2023

Gesture Midi Controller

Goal: to create a class for different tuning temperaments. In this class, I included my original justIntonation function and a new equalTemperament function. To accomplish this, I needed to learn how to declare a class in a header file and define the class in the related source file. I found this resource helpful.

2/28/2023

Bare Metal C

I'm on Chapter 10, Interrupts. There is a lot of technical jargon in this section, and I've not even finished reading it! I read half the chapter and implemented the example program today. Here's my key takeaways:

- Polling and Interrupts are the two main methods for handling I/O. Polling is easy to understand and implement but suffers in efficiency; Interrupts are hard to understand and implement but are efficient. In my estimation, one is not ubiquitously better than the other. Rather, Polling is useful when a process is guaranteed to happen frequently. Interrupts are useful when a process is going to happen semi-infrequently. This is a gross simplification, but the general logic makes sense and helps reinforce the concept.

- Important Acronyms:

- TDR - Transmit Data Register

- TSR - Transmit Shift Register

- TXE - TDR Empty; IRQ - Interrupt Request

- volatile - C keyword that tells the compiler that a variable may be changed

- TXEIE - Transmit Interrupt Enable

- UART and USART - (refresher) Universal Asynchronous Receiver-Transmitter; Universal Synchronous/Asynchronous Receiver/Transmitter

To really understand the USART protocol, I'll need to experiment with it directly. Once I finish the chapter, I will look up USART tutorials specifically for STM32 products.

3/1/2023

Bare Metal C

I finished Chapter 10 today. I didn't care for the second half of the chapter like I did the first. I found it difficult to follow, not because the material was too complex, though it certainly is complex, but because several things were glossed over that I think should have been clarified more. That's okay. I like this book as a whole and I have no problem consulting other resources to fill in any gaps. Before I continue to Chapter 11, I'm going to explore UART, polling, and interrupts more thoroughly.

This article on the UART Protocol is an excellent distillation. Much of it was review for me, but it also gave me some new mind maps to use when thinking about UART. I really want to get a firm grasp on serial communication. Here are some steps I can take:

- Implement my own STM32 program that sends and receives packets via UART on the Nucleo MCU.

- Find a device with an embedded system and see if I can connect to it via UART and read its log data.

3/2/2023

Exploring the Job Market

I stayed up way too late last night combing the internet for practical examples of embedded systems being used in the field. I browsed job listings to get a sense of what I might be aiming for, and I went on Reddit to get people's personal accounts. I got a little discouraged; everyone was talking about how difficult it is to get started in embedded systems and to get into the field as a professional. To lift my spirits, I explored other realms of development I could get into. I stumbled upon this repository, and it got me thinking that I ought to start contributing to open source projects if I can. It would be practical way to hone my programming skills since I'd be working on real-world projects. Perhaps I can make this effort the primary way I practice C++.

I also watched a couple of Jacob Sorber's videos on embedded systems this morning, and I regained my enthusiasm for the subject. I know I need to keep pursuing embedded systems; I just need to adjust my current learning process. Yesterday, I said I would linger on UART for a while before proceeding to Chapter 11 in Bare Metal C. Now, I think I'll move on to Chapter 11 sooner than later and plan on returning to the topic of serial com, among other things, when I begin the STM32 class on Udemy.

C++20 Masterclass